5-Min Brief: Stanford Just Published AI's Annual Report Card. Here's What It Says.

What you need to know — in 30 seconds

- Stanford University's Institute for Human-Centered Artificial Intelligence (HAI) publishes the AI Index, a comprehensive annual report on the state of AI; it's the closest thing the field has to an official annual checkup

- The 2026 edition came out yesterday and it's over 400 pages long, so we read it so you don't have to

- The headline: AI is getting dramatically better, dramatically faster, and the world is struggling to keep up

- People are adopting AI faster than they adopted the personal computer or the internet — and most of the systems meant to govern it haven't caught up

Every year, Stanford's Human-Centered AI institute publishes what has become the definitive annual report on where AI stands. It's comprehensive, data-driven, and genuinely useful for cutting through the hype in both directions — whether the hype is "AI will solve everything" or "AI is all smoke and mirrors."

The 2026 edition dropped yesterday. Here are the things worth knowing.

The models keep getting dramatically better

Remember when passing a hard exam felt like a major AI milestone? The goalposts keep moving because the models keep clearing them.

One benchmark the report tracks is called "Humanity's Last Exam": a collection of extraordinarily difficult questions across science, math, and the humanities, contributed by subject-matter experts and designed specifically to stump AI. When it launched in 2025, the best AI model answered just 8.8% of questions correctly.

As of this month, the best models are topping 50%.

That's not a small improvement. That's going from "barely functional" to "getting more than half of some of the hardest expert-written questions right" in roughly a year. The report notes that the current top performers are Anthropic's Claude Opus 4.6 and Google's Gemini 3.1 Pro.

The researchers add an important caveat, though: benchmark scores don't always translate into real-world results. A model that scores 75% on a legal reasoning benchmark might still be unreliable in an actual law practice. The gap between test performance and practical usefulness is one of the report's recurring themes.

Generative AI reached 53% adoption across the global population within three years, faster than the personal computer or the internet

Here's a number that puts the current moment in perspective: people are adopting AI tools faster than they adopted the personal computer and faster than they adopted the internet.

Both of those technologies reshaped civilization. The fact that AI is moving faster than either of them is either exciting or alarming depending on your disposition — probably both.

The report tracks AI adoption across industries and geographies and finds that it's genuinely broad. It's not just tech companies. Healthcare, finance, education, manufacturing, and government are all deploying AI at scale. The question the report keeps returning to is whether the infrastructure — regulatory, legal, ethical, educational — is keeping pace with the technology. The short answer is: not really.

AI is boosting productivity, but unevenly

The productivity gains from AI are real, but they're not uniform and they're not fully understood yet.

The report cites research showing AI is boosting productivity by 14% in customer service roles and 26% in software development. Those are meaningful numbers: in software development, 26% means roughly a quarter more output from the same number of people.

But — and this is important — those gains don't show up in tasks requiring more judgment, nuance, or contextual understanding. The more a job requires reading a room, navigating ambiguity, or making decisions with incomplete information, the less AI has helped so far.

The report includes one data point that perfectly captures AI's strange blind spots: the best AI model in the world reads an analog clock correctly just 50.1% of the time. Barely better than a coin flip. A system that can answer PhD-level physics questions can't reliably read a clock.

This maps to something we've covered before: AI is excellent at pattern-matching tasks and less reliable at tasks that require genuine understanding of messy, real-world situations. The productivity gains are concentrated in the parts of knowledge work that are more routine and predictable.

The US-China AI race is closer than most people think

This one might surprise you.

Most coverage of the AI race between the US and China assumes the US is comfortably ahead. The Stanford report suggests the gap is considerably narrower than that.

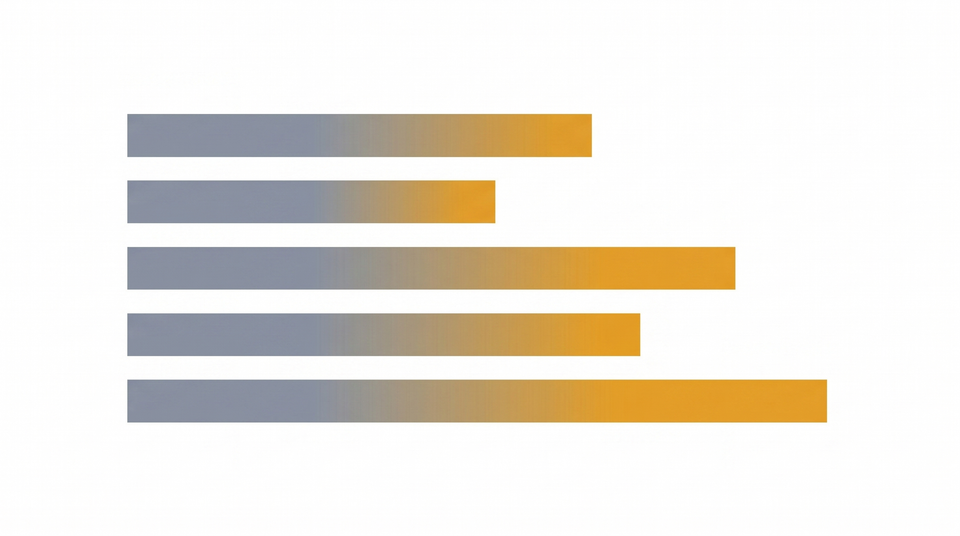

According to Arena, a platform that lets users compare AI models side by side on identical prompts, the US had a clear lead in early 2023 with ChatGPT. That gap narrowed through 2024. By early 2025, China's DeepSeek briefly matched the top US model. As of March 2026, US models still lead, but Chinese models such as DeepSeek and Alibaba's Qwen trail only modestly.

The report notes the US and China have different AI strengths — the US leads on frontier model development and research, China leads on deployment scale and manufacturing integration. Neither country has a clean overall advantage.

The systems meant to govern AI aren't keeping up

Perhaps the most important finding in the whole report isn't about capability — it's about governance.

The benchmarks used to measure AI are struggling to keep up. The policies meant to regulate it are lagging. The job market hasn't figured out how to handle AI-driven displacement. The legal system hasn't resolved who's responsible when AI causes harm.

None of this is surprising, but the report quantifies it in ways that are useful. Technological progress in AI is measured in months. Policy and legal progress is measured in years. That gap — between what AI can do and what society has decided to do about it — is the central challenge of this moment.

The short version

AI got significantly better this year. People are adopting it faster than they adopted the personal computer or the internet. The productivity gains are real but uneven. The US-China race is closer than headlines suggest. And the systems we've built to manage all of this haven't caught up yet.

That's where we are in April 2026. Same time next year, the numbers will look different again.

Enjoying HumanReadable-AI? This is exactly the kind of story we cover every day — the big picture, explained clearly. Subscribe below for the daily briefing, free.