Deep Dive: What Is AI, Actually?

What you need to know — in 30 seconds

- Anthropic built a new AI model called Claude Mythos that can find hidden security vulnerabilities in software — and then figure out how to exploit them

- It found thousands of these vulnerabilities in basically every major operating system and web browser, including bugs that had been sitting undetected for up to 27 years

- Anthropic is NOT releasing it to the public — instead they're using it to quietly fix problems before anyone can misuse it

- This is either a really good thing or a genuinely alarming thing, depending on how the next few years go

Let's start with a quick vocabulary lesson, because two words matter here: vulnerability and exploit.

A vulnerability is a flaw in software. A crack in the wall. It exists, it's a problem, but by itself it just sits there. An exploit is what happens when someone figures out how to use that crack to break in. One is a problem on paper. The other is a problem in real life.

Security researchers have spent decades finding vulnerabilities. That part is hard but doable. Turning a vulnerability into a working exploit — actually figuring out how to use it to break into a system — that's the part that requires real expertise and usually takes skilled humans days or weeks.

An AI just started doing both. Autonomously. In hours.

What actually happened

Anthropic — the company behind the Claude AI assistant — has been quietly testing a new, unreleased model called Claude Mythos. This week they went public with what it can do, and the cybersecurity world promptly had a small collective freakout.

Claude Mythos Preview can autonomously identify zero-day vulnerabilities and then construct working exploits for them in every major operating system and web browser. Zero-day means previously unknown: the people responsible for the software had no idea these bugs existed, so no fix was available.

The numbers are striking. In just the past few weeks, Anthropic says its Mythos Preview has identified thousands of zero-day vulnerabilities, many of which were critical and difficult to detect, including some in every major operating system and web browser. Several of the vulnerabilities discovered using the model had existed undetected for years — the oldest being a 27-year-old bug in OpenBSD.

Twenty-seven years. That bug had been sitting there since 1999, through countless security audits and entire careers of professional security researchers examining the same code, and an AI found it in a matter of hours.

One example that made the rounds: in FreeBSD, Mythos Preview autonomously identified and fully exploited a 17-year-old remote code execution flaw, granting unauthenticated root access — without any human involvement after the initial prompt. "Root access" means complete control of the machine. "Unauthenticated" means you don't need a password or login. Just… in.

Here's the part Anthropic wants you to pay attention to

They're not releasing this thing.

Instead, they launched something called Project Glasswing — a coalition of tech companies including Microsoft, Apple, Google, Cisco, and about 40 others. The idea: use Mythos to find these vulnerabilities and get them fixed before bad actors can use similar capabilities to find and exploit the same holes.

Project Glasswing partners will receive access to Claude Mythos Preview to find and fix vulnerabilities or weaknesses in their foundational systems — systems that represent a very large portion of the world's shared cyberattack surface.

The logic is: if AI is going to be capable of this, better to get ahead of it defensively now, before that capability is widely available.

The uncomfortable part

Here's what Anthropic said that nobody really wants to think about too hard:

"We did not explicitly train Mythos Preview to have these capabilities. Rather, they emerged as a downstream consequence of general improvements in code, reasoning, and autonomy. The same improvements that make the model substantially more effective at patching vulnerabilities also make it substantially more effective at exploiting them."

They didn't build a hacking AI. They built a smarter AI, and it turned out to be really good at hacking as a side effect.

That's either reassuring (the company is being transparent and getting ahead of it) or slightly terrifying (these capabilities are emerging whether anyone plans for them or not), depending on your disposition.

Probably a little of both.

What this means for you, right now

Unless you're running critical infrastructure, today's news doesn't change what you do tomorrow morning. Your laptop isn't suddenly less safe than it was yesterday.

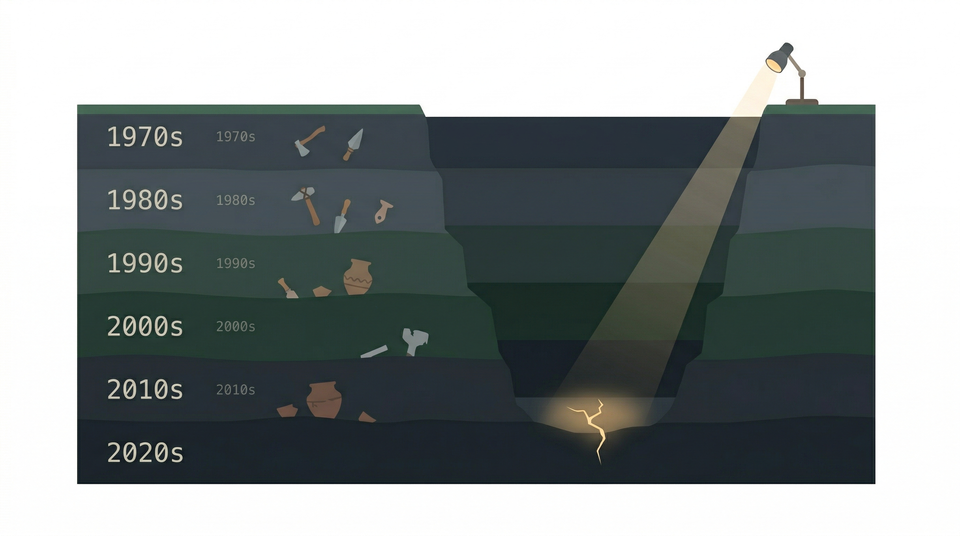

But zoom out a little. The reason this matters is the trajectory. Six months ago, AI models were pretty good at finding vulnerabilities but bad at exploiting them. Now one model can do both, end-to-end, autonomously, in hours, at scale.

The 20-year equilibrium, in which finding a flaw was doable but turning it into a working exploit took skilled humans days or weeks, is gone. The gap between "a security flaw exists" and "someone is actively using it against you" just got a lot shorter. The security industry is going to have to move faster, and companies that haven't taken software security seriously are going to feel it sooner.

The good news — and there is good news — is that the same capability works in both directions. The AI that finds holes faster can also patch them faster. Anthropic's bet is that if defenders get there first, the balance still tips toward security rather than chaos.

Whether that bet pays off is probably the most important cybersecurity question of the next few years.

This is the kind of story we'll be following closely at HumanReadable-AI. If you want the next briefing in your inbox, subscribe below — it's free.